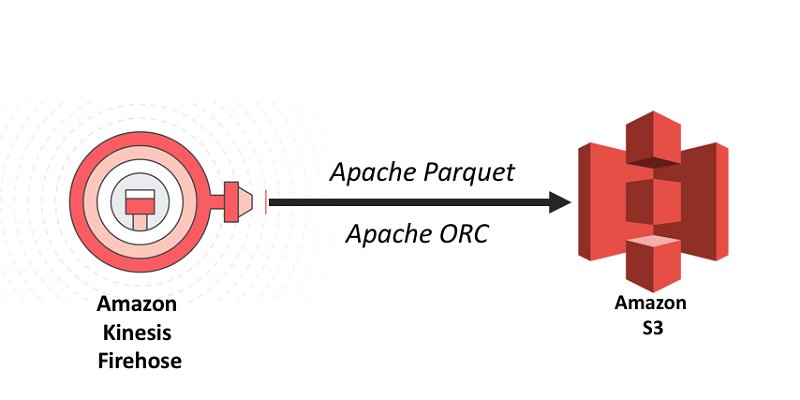

Amazon Web Services on Twitter: "Convert your data to Apache Parquet or ORC on-the-fly in Kinesis Data Firehose before delivery to your data lake in Amazon S3. https://t.co/qamBf2kcCq https://t.co/M4ePevzVlj"

Analyze Apache Parquet optimized data using Amazon Kinesis Data Firehose, Amazon Athena, and Amazon Redshift | AWS Big Data Blog

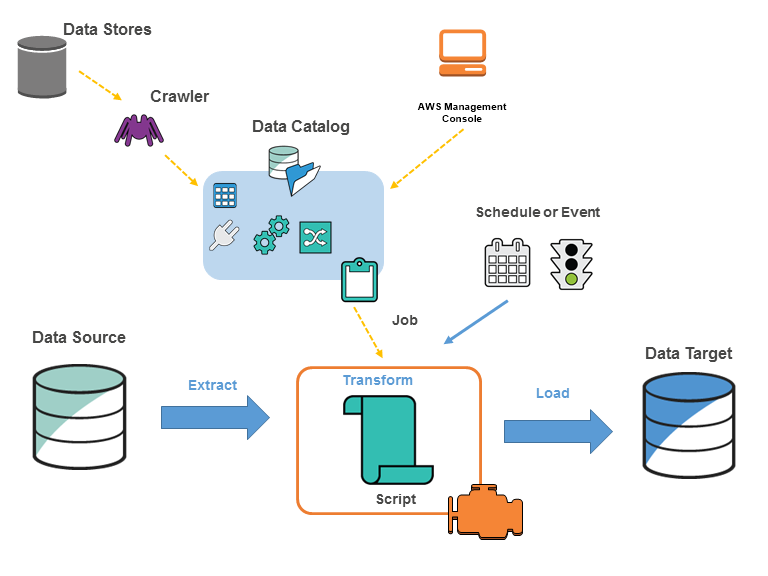

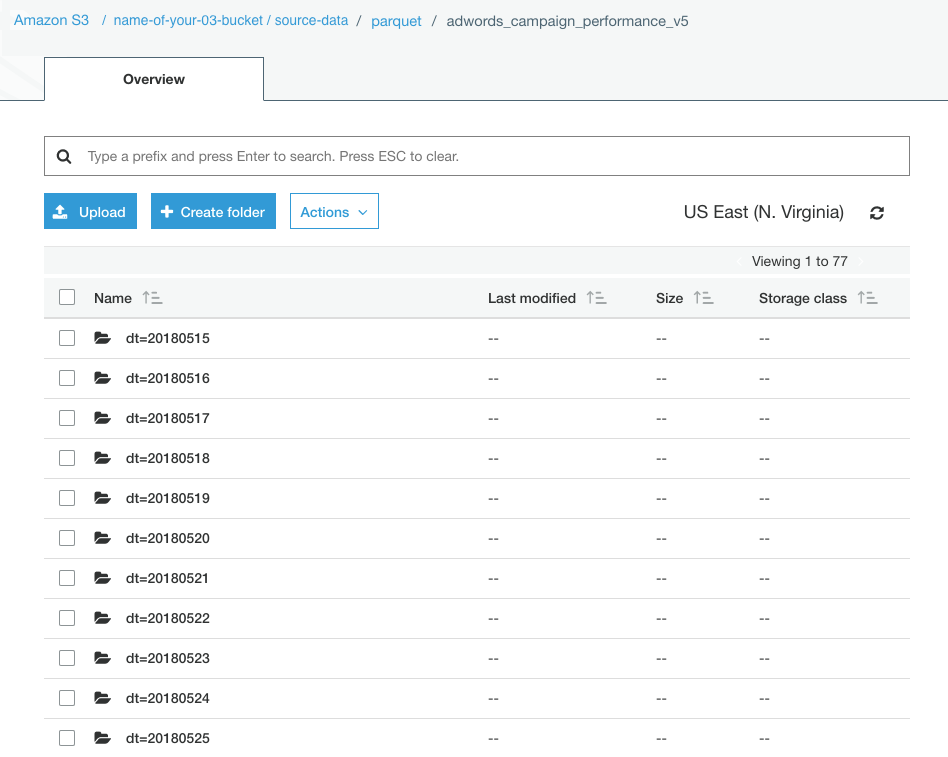

How To Process, Organize and Load Your Apache Parquet Data To Amazon Athena, AWS Redshift Spectrum, Azure Data Lake Analytics or Google Cloud | by Thomas Spicer | Openbridge

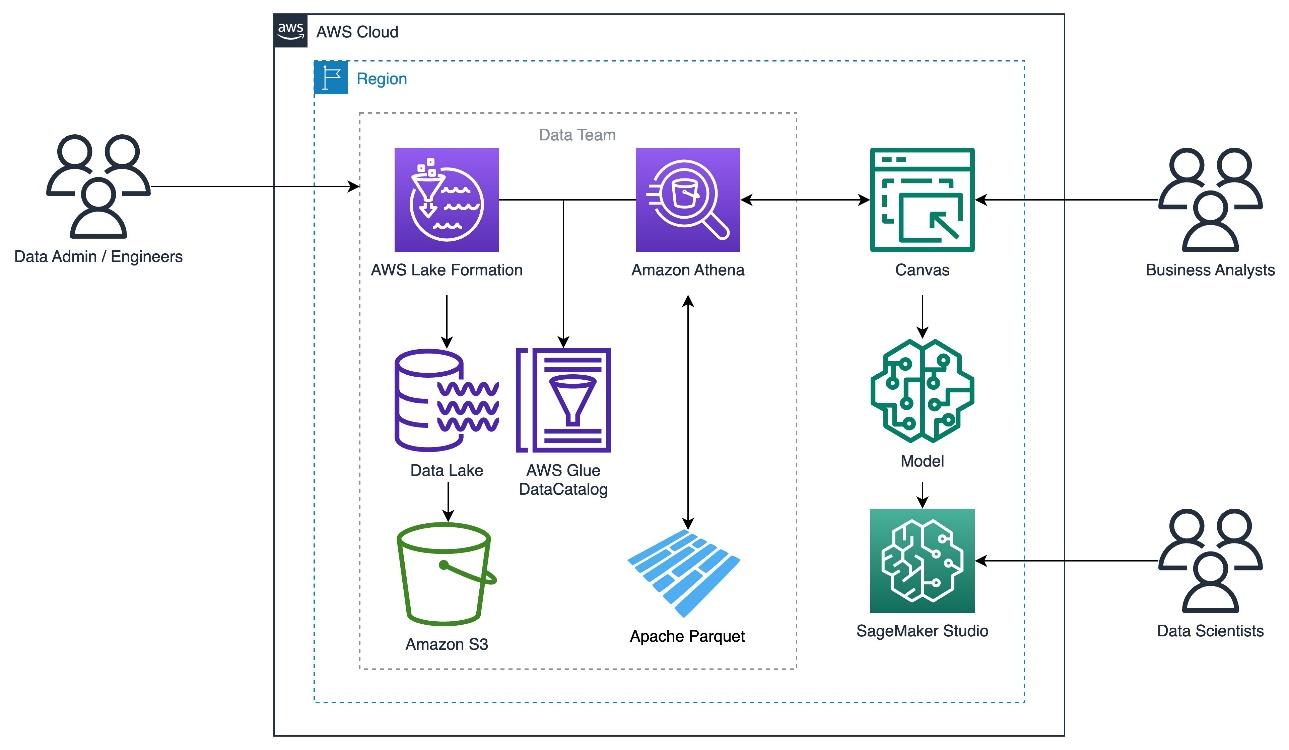

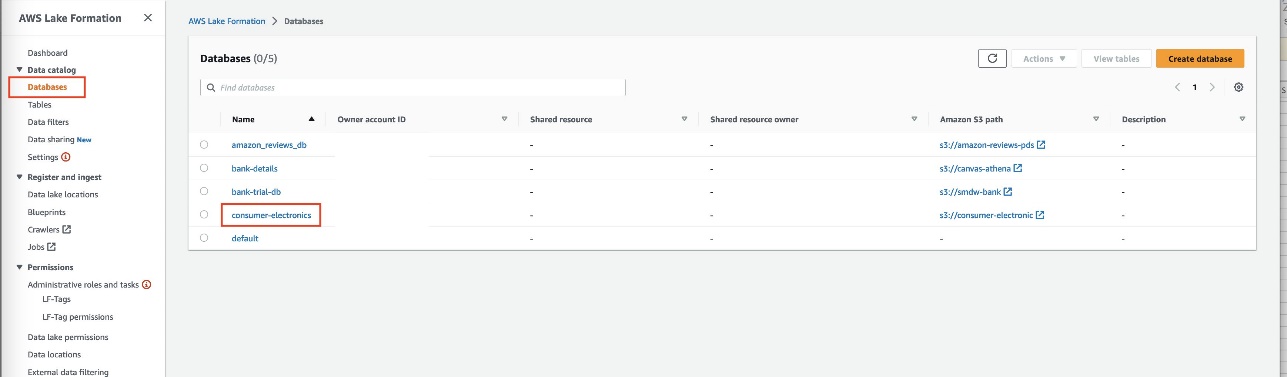

Use Amazon SageMaker Canvas to build machine learning models using Parquet data from Amazon Athena and AWS Lake Formation | AWS Machine Learning Blog

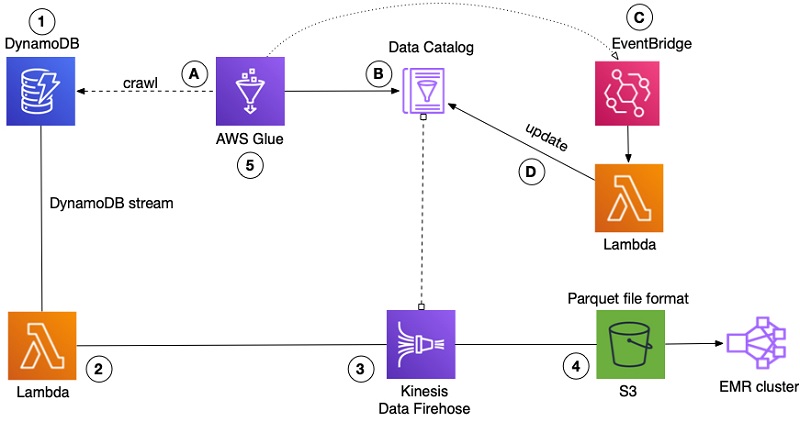

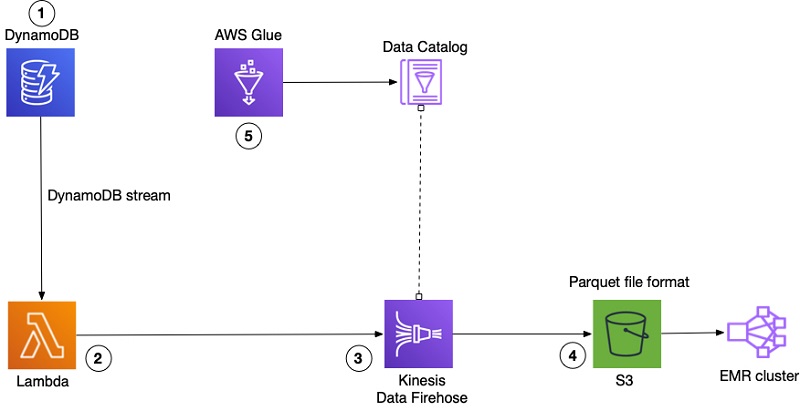

Export and analyze Amazon DynamoDB data in an Amazon S3 data lake in Apache Parquet format | AWS Database Blog

How Goodreads offloads Amazon DynamoDB tables to Amazon S3 and queries them using Amazon Athena | AWS Big Data Blog

Parquet Solid Color French Wool Beret. Classic French, Casual and Chic Lightweight Beige at Amazon Women's Clothing store: Women Wool Beret

How FactSet automated exporting data from Amazon DynamoDB to Amazon S3 Parquet to build a data analytics platform | AWS Big Data Blog

Amazon.com: Miele XL Parquet Twister, Attachable Vacuum Floorhead for Sensitive Hard Floors, 16 Inches : Home & Kitchen